Blog “Using Evidence to Improve and Scale Up Development Program in Education: The Case of the Indian NGO Pratham”

2024.04.05

At the JICA Ogata Sadako Research Institute for Peace and Development (JICA Ogata Research Institute), researchers with diverse experience and backgrounds are forging partnerships with a wide range of stakeholders and partners. In this blog post series, we share the knowledge and perspectives they have gained from their research activities. This time, Maruyama Takao, senior research fellow at the institute, draws on a case study of an NGO in India to discuss how development agencies can use impact evaluation and evidence to improve their programs.

Author:

MARUYAMA Takao, Senior Research Fellow

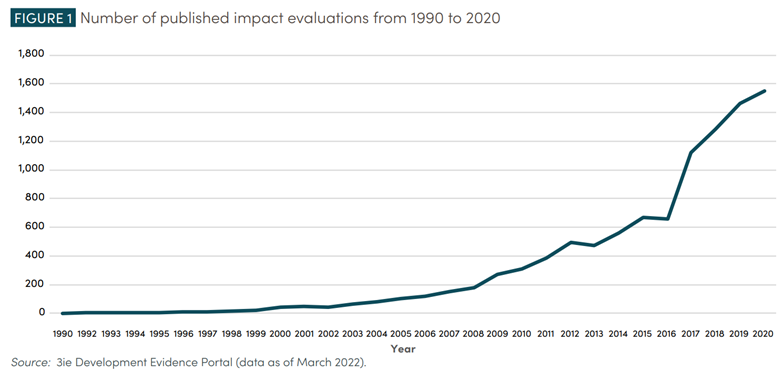

In the field of international development, impact evaluation methods that verify the effectiveness of interventions, such as randomized controlled trials (RCTs), started to gain popularity in the 2000s. Over the years, impact evaluation has become a common method in this field. The number of published papers on impact evaluation in low- and lower-middle-income countries was 257 during 2000-2004, and this increased by more than 6.5 times to 1,678 for 2010-2015 (Sabet & Brown, 2018). Evidence continued to accumulate across various topics, and the number of publications for 2016-2020 reached 3,269, almost double the count for the preceding period (3ie, 2023).
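As a quick check of the arithmetic behind these growth figures, the ratios can be recomputed from the counts quoted above. This is only a minimal sketch: the dictionary keys and the helper name `growth_ratio` are illustrative choices, not anything taken from the cited sources, and the periods compared are not all the same length.

```python
# Publication counts as quoted in the text (Sabet & Brown, 2018; 3ie, 2023).
counts = {
    "2000-2004": 257,
    "2010-2015": 1678,
    "2016-2020": 3269,
}


def growth_ratio(later: int, earlier: int) -> float:
    """Return how many times larger `later` is than `earlier`."""
    return later / earlier


# Hypothetical variable names, used only for this illustration.
ratio_early = growth_ratio(counts["2010-2015"], counts["2000-2004"])
ratio_late = growth_ratio(counts["2016-2020"], counts["2010-2015"])

print(f"2000-2004 to 2010-2015: {ratio_early:.2f}x")
print(f"2010-2015 to 2016-2020: {ratio_late:.2f}x")
```

The first ratio comes out slightly above 6.5 and the second slightly below 2, which matches the wording "more than 6.5 times" and "almost double."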

Fig. 1. Number of new publications on impact evaluation per year in the international development field

Source: Kaufman et al., 2022

Note: The figure shown above is from Kaufman et al. (2022). In addition to publications on low- or lower-middle-income countries, the count in the figure includes those on upper-middle-income countries.

While the number of publications on impact evaluation has increased, are development agencies making good use of impact evaluation and evidence to improve and scale up their programs? Papers published in academic journals often use technical language and are not easy for development agency staff to read. Systematic reviews provide overviews of the evidence available in each field or on each issue, but they do not describe the interventions in enough detail for practical application.

In the field of educational development, an Indian NGO, “Pratham,” introduced impact evaluation in the early 2000s and has used evidence to improve the effectiveness of its programs and scale them up. Pratham was founded by two university professors in Mumbai in the mid-1990s, and since then, it has grown into an organization with over 6,000 staff members. Pratham operates programs in various states in India. In 2018, Pratham implemented or supported programs to improve child literacy in 21 states in India, and approximately 21 million children are estimated to have benefited from them (Pratham Education Foundation, 2019).

Pratham identified a learning agenda that is critical to its strategy and used impact evaluation to address it. A learning agenda is a set of questions that are directly related to an organization’s strategy and operation. Answering these questions is expected to make the organization more efficient and effective (USAID, 2021).

For example, in the mid-2000s, the organization was in the process of expanding its programs from cities such as Mumbai to rural areas. The learning agenda at the time was whether a program to improve child literacy and numeracy, which had operated successfully in cities, could be run in the different context of rural villages, where the environment was less structured, and by local community volunteers instead of Pratham staff. To address the learning agenda, in collaboration with researchers, Pratham designed interventions leveraging village volunteers and verified their impact through an RCT (Banerjee et al., 2010). Evidence from the RCT helped Pratham secure funding, and the program was scaled up widely across rural India by leveraging village volunteers (Dutt et al., 2016).

Pratham then turned its efforts to integrating the program into public schools through collaboration with state governments. How could the literacy and numeracy program developed by Pratham be implemented effectively by public school teachers? To address this learning agenda, Pratham designed a set of interventions that included the distribution of teaching and learning materials, teacher training, and support offered by volunteer staff. Their effectiveness was tested through RCTs (Banerjee et al., 2016).

The RCT results showed that material distribution, teacher training, and in-class support from volunteers, whether alone or in combination, did not change teachers’ behavior or student learning. An interview survey of teachers showed that while they evaluated Pratham’s pedagogy positively, they did not have time to implement it on top of completing the mandatory curriculum (Banerji, 2019; Duflo, 2020). Based on this, Pratham developed a strategy of securing timeslots in public schools for the program in consultation with the state government and of building the capacity of the state government’s existing structure to implement the program. Pratham conducted another RCT to verify the effectiveness of this state government partnership model. Based on the evidence, Pratham developed government partnership programs in different states (Banerji, 2019).

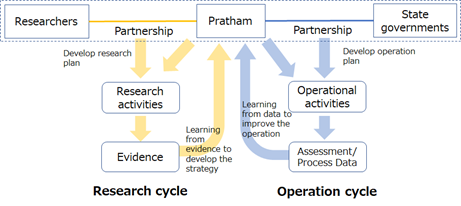

Fig. 2 conceptualizes evidence use by Pratham (Maruyama, 2023). The right side of this figure shows the program-operation cycle of Pratham. Programs are implemented either directly by Pratham or indirectly through partnerships with state governments. In this cycle, data on child literacy and numeracy are collected. The left side shows the research cycle. Pratham conducts research activities such as impact evaluation through partnerships with researchers, as well as regular surveys on child literacy and numeracy in India through partnerships with various organizations and the help of volunteer staff. The survey results are publicly shared (ASER Centre, 2015).

Pratham measures educational issues, such as the current state of child literacy and numeracy, and gains an understanding of them through research. Based on data collected through surveys and program implementation, Pratham develops solutions for the identified issues. Upon identifying a learning agenda related to its strategy, Pratham verifies the effectiveness of these solutions through impact evaluation (research) and feeds the results back into its programs.

Fig. 2 The combination of operation and research cycles at Pratham

Source: Maruyama, 2023

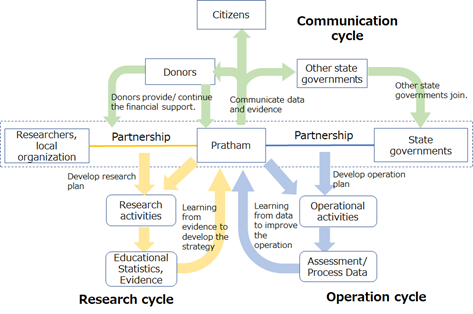

Furthermore, Pratham widely communicates the data and evidence from programs and research to gain and sustain support from donors and partnerships with state governments. In Fig. 3, the cycle of communicating data and evidence to various stakeholders is combined with the cycles in Fig. 2. The current state of child literacy and numeracy in Indian states, as revealed by research, raises people’s awareness of the importance of the identified issues. The solutions developed through programs and impact evaluation (research) put forward measures that can address the issues. By linking the operation, research, and communication cycles of data and evidence, Pratham has improved its operations and has also expanded its support and partners for scaling up programs.

Fig. 3 Search, learning, and communication cycle using evidence

Source: Maruyama, 2023

The integrated cycle of search, learning, and communication, conceptualized from Pratham’s case, suggests a way for development agencies to use evidence effectively. For further detail, please read the publications listed in the references, such as Maruyama (2023).

Note: This case study on evidence use by Pratham was supported by JSPS KAKENHI for Scientific Research (C) Grant Number JP20K02559.

ASER Centre. 2015. ASER Assessment and Survey Framework. Retrieved from http://img.asercentre.org/docs/Bottom%20Panel/Key%20Docs/aserassessmentframeworkdocument.pdf

Banerjee, Abhijit, Rukmini Banerji, Esther Duflo, Rachel Glennerster, and Stuti Khemani. 2010. “Pitfalls of Participatory Programs: Evidence from a Randomized Evaluation in Education in India.” American Economic Journal: Economic Policy, 2 (1): 1-30.

Banerjee, Abhijit, Rukmini Banerji, James Berry, Esther Duflo, Harini Kannan, Shobini Mukerji, Marc Shotland, and Michael Walton. 2016. “Mainstreaming an Effective Intervention: Evidence from Randomized Evaluations of ‘Teaching at the Right Level’ in India.” NBER Working Paper, No. 22746.

Banerji, Rukmini. 2019. “Banerjee and Duflo’s journey with Pratham.” Ideas for India, Nov. 13, 2019.

Duflo, Esther. 2020. “Field Experiments and the Practice of Policy.” American Economic Review, 110 (7): 1952-1973.

Dutt, Shushmita Chatterji, Christina Kwauk, and Jenny Perlman Robinson. 2016. Pratham’s Read India Program: Taking small steps toward learning at scale. Center for Universal Education.

International Initiative for Impact Evaluations (3ie). 2023. Development Evidence Portal. https://developmentevidence.3ieimpact.org/

Kaufman, Julia, Amanda Glassman, Ruth Levine, and Janeen Madan Keller. 2022. Breakthrough to Policy Use: Reinvigorating Impact Evaluation for Global Development. Center for Global Development.

Maruyama, Takao. 2023. “Using Evidence to Improve and Scale up Development Program in Education: A Case Study from India.” World Development Perspectives, Vol. 32: 100542. https://doi.org/10.1016/j.wdp.2023.100542

Pratham Education Foundation. 2019. Annual reports 2018-19. Pratham Education Foundation.

Sabet, Shayda Mae, and Annette N. Brown. 2018. “Is impact evaluation still on the rise? The new trends in 2010–2015.” Journal of Development Effectiveness, 10 (3): 291-304.

USAID. 2021. “Learning Lab: CLA Toolkit.” Retrieved from https://usaidlearninglab.org/qrg/learning-agenda

Note: This blog expresses the individual views of the author, not the views of JICA nor of the JICA Ogata Sadako Research Institute for Peace and Development.

About the Author:

Maruyama Takao has been a senior research fellow at the JICA Ogata Research Institute since 2022. He joined JICA in 2002 and has worked in various sections, including the Africa Department, Senegal Office, and Human Development Department.
